Insights on AI Hardware Strategy, Edge AI Architecture, and Semiconductor Innovation

Lighthouse AI Technologies Files Provisional Patent for Adaptive Edge AI Architecture

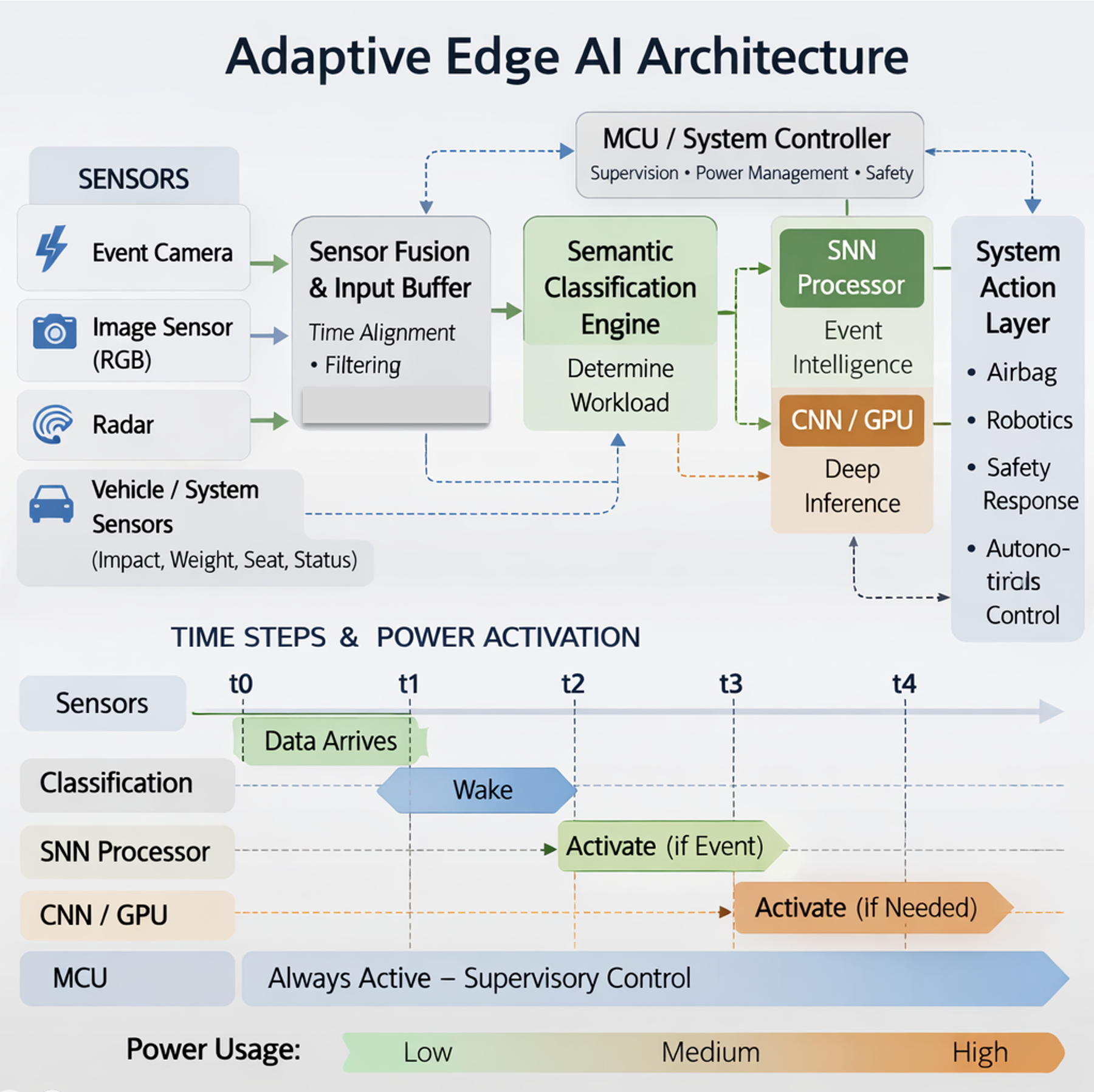

Conceptual architecture illustrating adaptive workload routing across heterogeneous edge compute resources.

Lighthouse AI Technologies recently filed a U.S. provisional patent application covering an adaptive edge computing architecture designed to improve how artificial intelligence systems operate in power-constrained environments.

The concept explores using semantic interpretation of sensor inputs to dynamically route workloads across heterogeneous compute resources at the edge. By selectively activating different inference engines based on the nature of incoming data, the architecture aims to balance power consumption, latency, and system responsiveness.
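The general routing idea can be illustrated with a toy Python sketch. This is not the method described in the provisional filing, whose details are not given here. The sketch assumes the semantic interpretation step has already reduced a sensor input to a coarse label. The engine names, the power and latency figures, the label-to-engine table, and the `route` function are all hypothetical placeholders. The router picks the lowest-power engine that meets both a latency budget and a power budget. If no engine qualifies, it falls back to the fastest candidate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Engine:
    name: str
    power_mw: float    # hypothetical active power draw, milliwatts
    latency_ms: float  # hypothetical per-inference latency, milliseconds


# Hypothetical engines; the numbers are placeholders, not measurements.
ENGINES = {
    "always_on_mcu": Engine("always_on_mcu", power_mw=1.0, latency_ms=20.0),
    "npu": Engine("npu", power_mw=150.0, latency_ms=5.0),
    "gpu": Engine("gpu", power_mw=2000.0, latency_ms=2.0),
}

# Hypothetical mapping from a coarse semantic label (assumed to come from an
# upstream interpretation step) to the engines allowed to handle it.
ROUTES = {
    "background": ["always_on_mcu"],
    "person_detected": ["npu", "gpu"],
    "safety_critical": ["npu", "gpu"],
}


def route(label: str, latency_budget_ms: float, power_budget_mw: float) -> str:
    """Return the lowest-power candidate engine that meets both budgets."""
    # Unknown labels default to the always-on, lowest-power engine.
    candidates = [ENGINES[n] for n in ROUTES.get(label, ["always_on_mcu"])]
    feasible = [
        e for e in candidates
        if e.latency_ms <= latency_budget_ms and e.power_mw <= power_budget_mw
    ]
    if not feasible:
        # No engine meets both budgets: favor responsiveness over power.
        return min(candidates, key=lambda e: e.latency_ms).name
    return min(feasible, key=lambda e: e.power_mw).name
```

For example, `route("background", 50.0, 10.0)` selects `always_on_mcu`. By contrast, `route("safety_critical", 3.0, 500.0)` finds no engine within both budgets and falls back to `gpu`, trading power for responsiveness.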

As AI continues moving from centralized cloud infrastructure to distributed edge environments, system-level orchestration of compute resources will become increasingly important. Edge AI platforms must manage diverse workloads across sensors, processors, and accelerators while operating within strict energy and performance constraints.

This work reflects Lighthouse AI Technologies’ ongoing focus on AI hardware strategy, edge inference architectures, and semiconductor system innovation.

More insights on AI hardware and edge computing architectures will be shared here as the field continues to evolve.

The Gravity of AI: Why Intelligence Is Moving From the Cloud to the Edge

Why power, latency, and deployment economics are reshaping where AI runs.

As AI expands into vehicles, healthcare, industrial systems, and infrastructure, intelligence increasingly needs to operate closer to where data is created. This piece explores why power, latency, and deployment economics are pushing AI from centralized cloud models toward edge and hybrid architectures.

Read the full article and discussion on LinkedIn →

AI at Scale Is Forcing a Rethink of Power and Performance

Practical perspectives on AI, edge intelligence, and real-world deployment challenges.

Imagine driving on a dark road when a moose steps into your lane.

There’s no time to send data to the cloud.

The vehicle must detect, decide, and react instantly.

Now imagine a wearable detecting a dangerous heart rhythm — or a fall in the home of an elderly patient.

These decisions can’t wait either.

As AI moves into the physical world — vehicles, healthcare, factories, and infrastructure — intelligence increasingly needs to operate in real time, close to where events occur.

This is shifting the focus from raw performance toward efficiency, latency, and reliability.

At the edge, ultra-low-power intelligence enables immediate response where connectivity and energy are limited.

At hyperscale, power consumption is becoming a dominant driver of total cost of ownership. Data centers are gravitating toward regions with lower land and energy costs, while more efficient processing approaches are being explored to manage power density and operating expense.

AI isn’t just scaling in capability.

It’s forcing a rethink of where and how intelligence runs.

Less about more compute.

More about smarter placement of intelligence.

Read the full article and discussion on LinkedIn →

Additional Perspectives

Managing Risk in Product & Project Development

Successful product development is not just about innovation — it’s about identifying risk early, aligning stakeholders, and maintaining execution discipline. In complex environments, unmanaged risk often becomes the primary driver of cost overruns, delays, and program failure.

Being Deliberate About AI Adoption

AI delivers the greatest value when applied with clear purpose and measurable outcomes. Organizations benefit most when AI initiatives are aligned with operational realities and business objectives — rather than deployed simply because the technology is available.